Background

The goal was to redesign the campaign creation flow to enhance UX, increase task success, and minimize backend changes. Initial benchmarking revealed critical usability issues: users spent 16.28 minutes per task, completed it successfully only 65% of the time, and rated it 3.4/7 on the Single Ease Question (SEQ), where a higher score means an easier task.

A UX benchmarking study established baseline metrics and identified where new users struggled with core flows.

| Metrics | Initial design |
|---|---|
| Time on task | 16.28 min |
| Success rate | 65% |
| Difficulty (SEQ) | 3.4/7 |

My Role

As Lead Designer, I drove this redesign from discovery through delivery, collaborating with a product manager, a UX writer, and the development team to prioritize user experience in every decision.

Key responsibilities:

Led end-to-end redesign through discovery, design, development, and delivery

Conducted research, facilitated workshops, ran user interviews, and built journey maps

Analyzed data to identify user needs and validated decisions through usability testing

Created user-centered solutions through wireframes, prototypes, and visual designs within technical constraints

Established UI standards through cross-functional collaboration

Contributed to quarterly strategy and roadmap planning

Built a design system to accelerate onboarding and reduce confusion

Research

The first step was assessing how users create campaigns to identify improvements.

Research activities included:

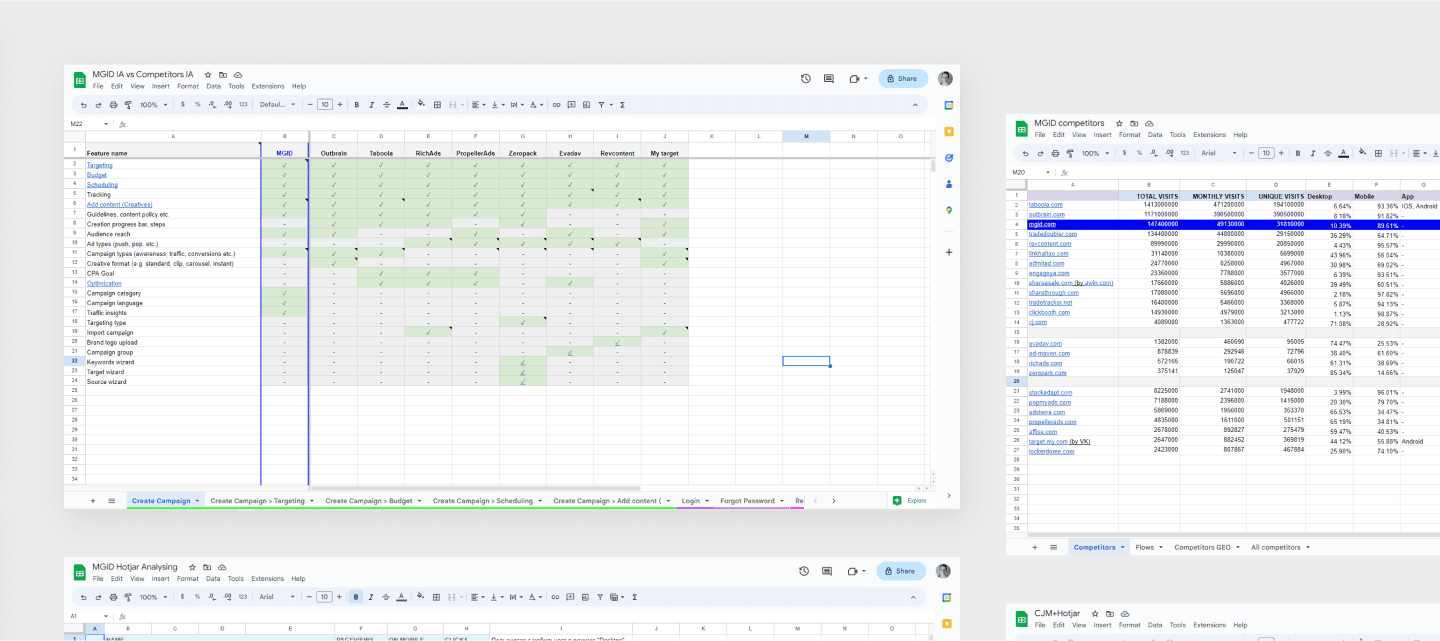

Competitor audits — Surveyed experts, used SimilarWeb Pro to analyze top 10 competitors, compared their IA to MGID's, identified improvement opportunities

Heatmap analyses — Analyzed Hotjar data and session recordings to validate assumptions and uncover insights

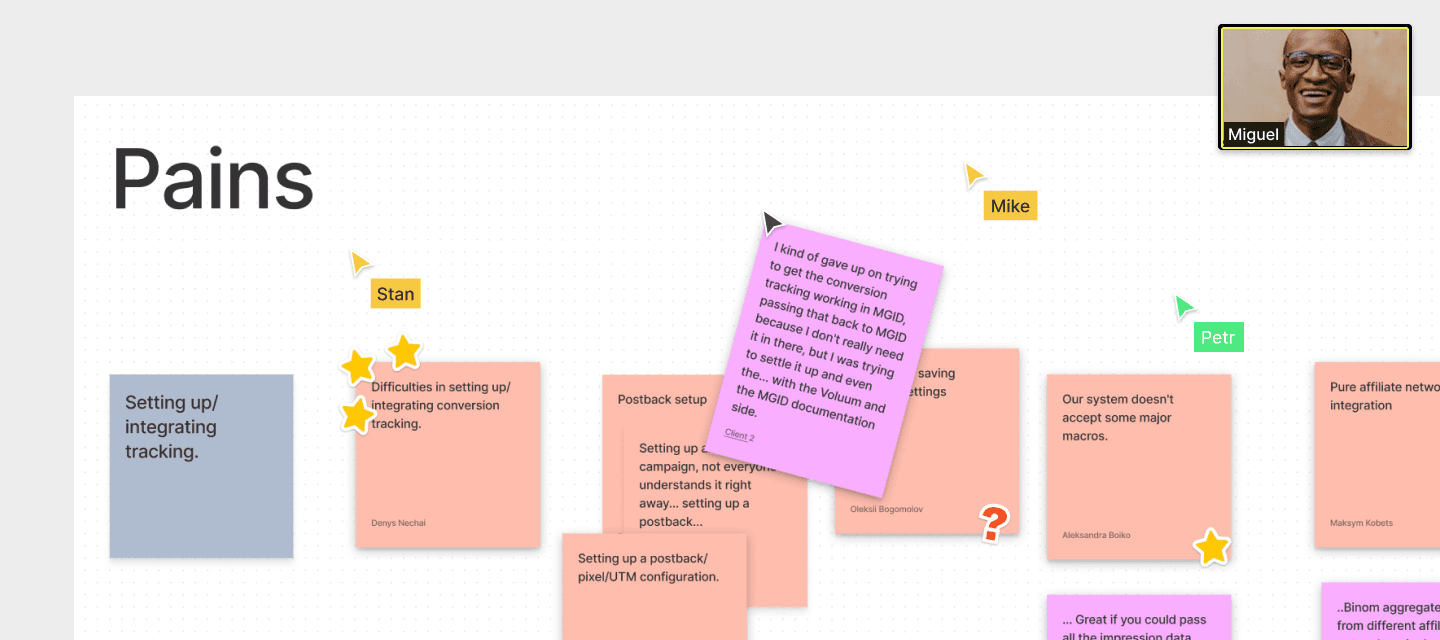

Workshops — Facilitated sessions with product manager and subject-matter experts (affiliates, media buyers, brands) to map customer journeys and identify pain points

User interviews — Validated hypotheses and gained deeper understanding of affiliate workflows

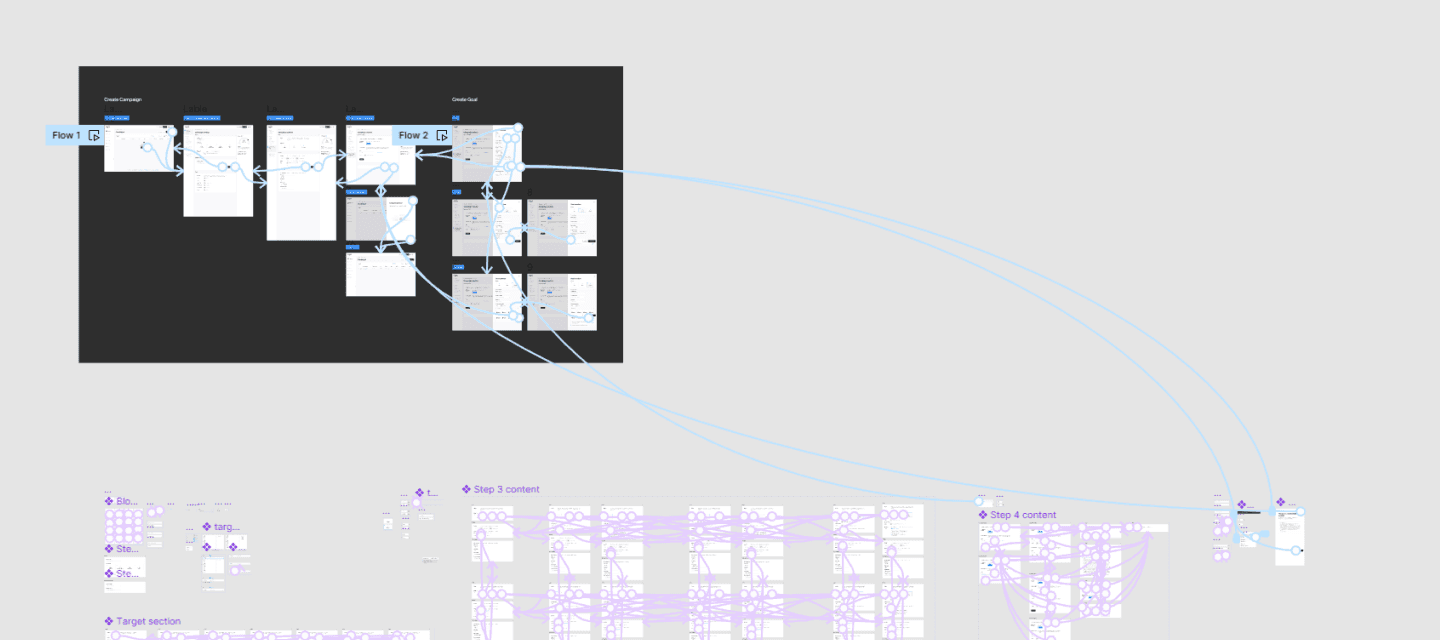

Wireframes & Prototyping

After establishing design direction, the next phase involved creating wireframes and updating user flows. Designs were presented to users and stakeholders for testing and feedback, then refined based on their input.

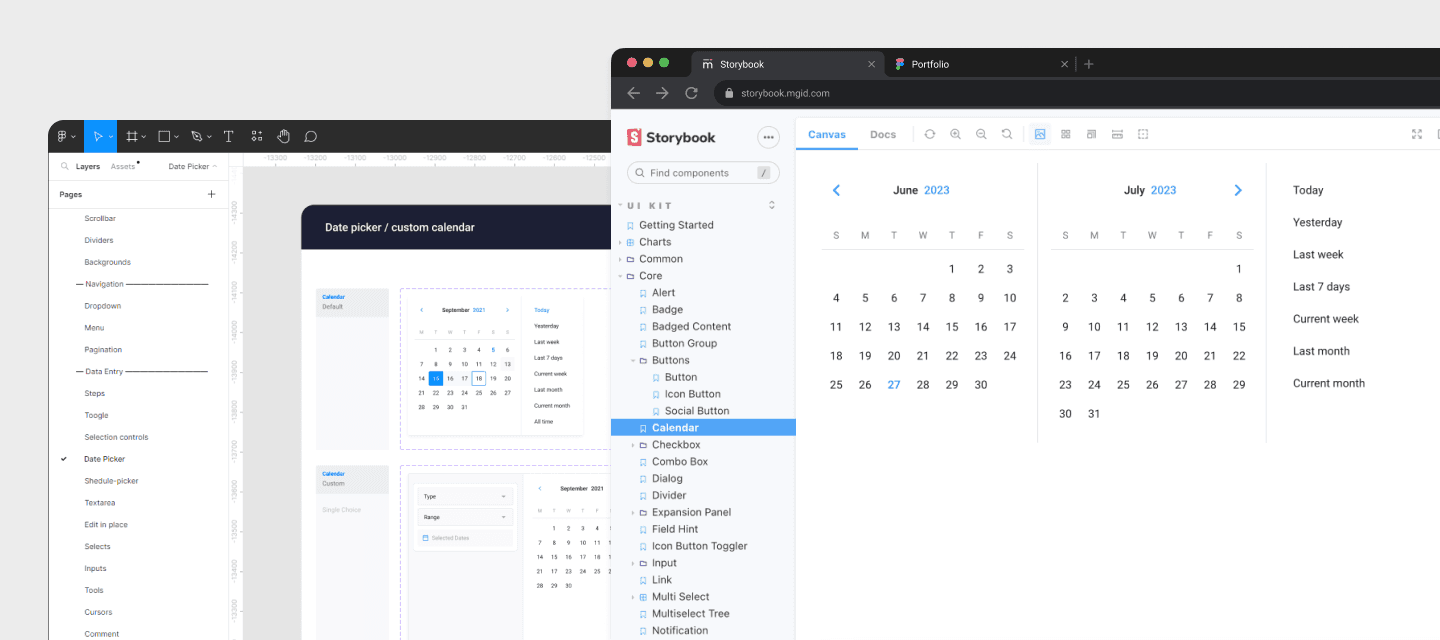

Design system integration

A component library was created to streamline development and improve efficiency. The platform lacked consistency, which slowed work, complicated collaboration, and created usability issues.

Research from Anja Klüver at Prospect showed that design systems can make projects 30% faster and 30% cheaper.

Benefits achieved:

Reduced learning curve through predictable interfaces

Freed time for research and complex problem-solving

Enabled future scalability

Saved 50% time on common patterns

Increased development efficiency by 25%

Accelerated prototype building by 25%

Streamlined onboarding for new designers and developers

Campaign Performance

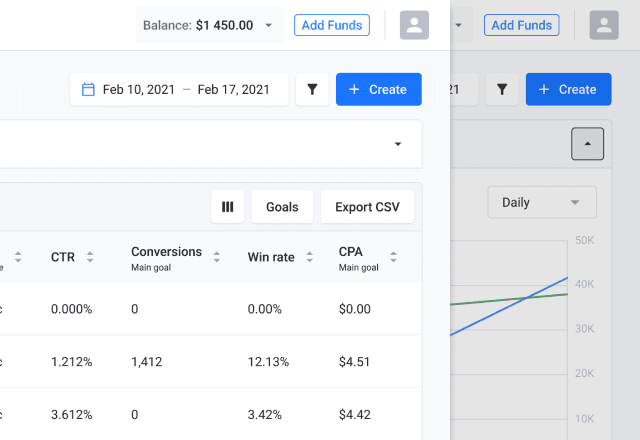

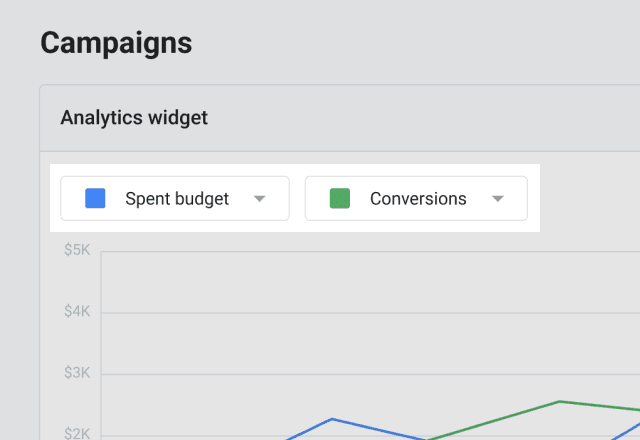

The objective was to simplify the page without compromising functionality or blocking new features.

Key improvements:

Relocated Advertiser/Publisher switch to user profile

Added collapsible graph based on user preference for table data

Made graph dynamic for custom metric comparison

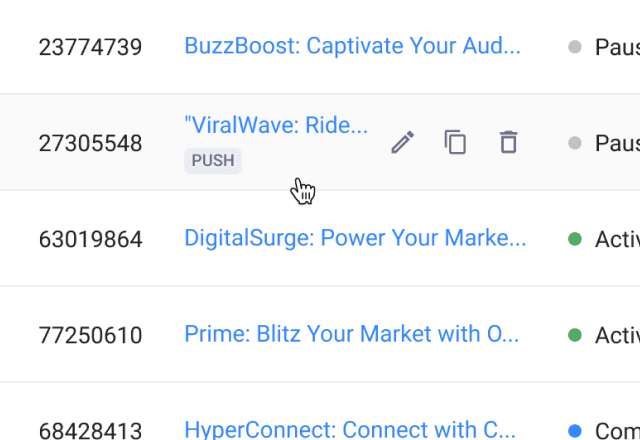

Restructured campaign actions: secondary options now appear only on hover, and Statistics, Optimization, and Ads moved to the campaign page

I then improved the functionality of the Campaign performance page with the following enhancements:

Users can now collapse the graph, since research showed they prefer to focus on the data in the table.

Working with a product owner, I made the graph dynamic so that users can select the metrics they want to compare.

To smooth the user flow, I restructured the actions for each campaign in the table, revealing secondary actions only on hover and moving "Statistics," "Optimization," and "Ads" to the campaign page.

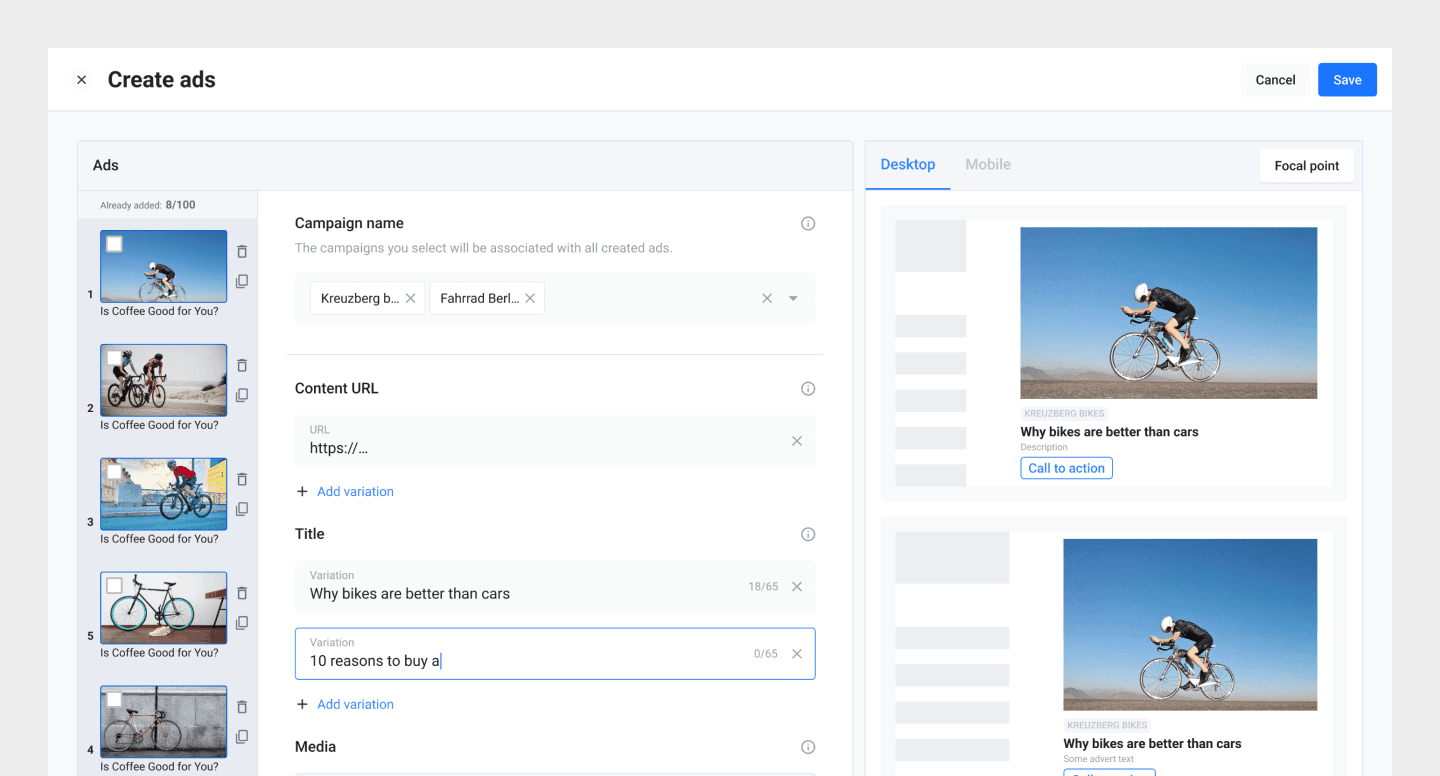

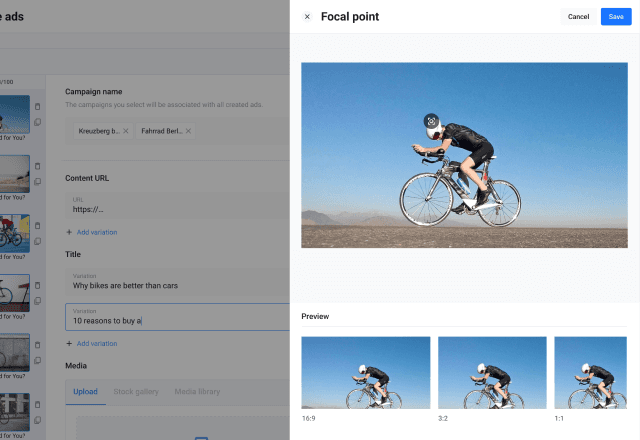

UX research found most users, especially affiliates, create multiple variations for each ad during a single session by combining different text and image options for optimal conversions.

The previous interface slowed this process, requiring users to redo the ad creation flow for each image-text pair. To streamline it, we introduced variative ad creation: the interface now auto-generates a range of text-image pairings, so users no longer repeat the flow for every pair.

Updated Ads creation interface with ads variations

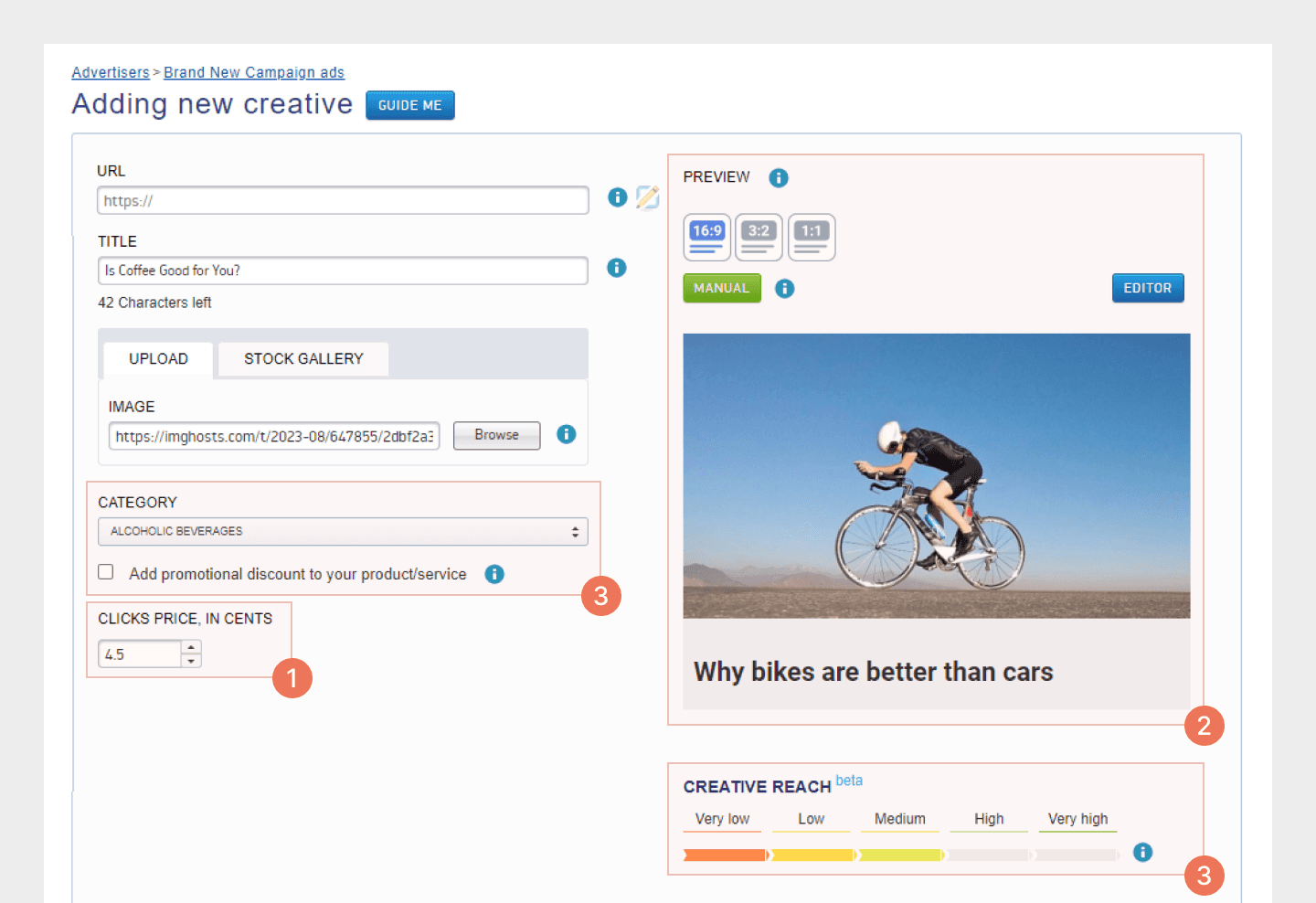
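The pairing logic behind variative ad creation can be illustrated with a Cartesian product. This is a minimal sketch, not the platform's implementation: the function name `generate_ad_variations`, the dictionary shape, and the sample titles and images are all hypothetical.

```python
from itertools import product


def generate_ad_variations(titles, images):
    """Pair every title with every image (Cartesian product)."""
    return [
        {"title": title, "image": image}
        for title, image in product(titles, images)
    ]


# Hypothetical inputs: two titles and three images, each entered once.
titles = ["Title A", "Title B"]
images = ["img-1.png", "img-2.png", "img-3.png"]

variations = generate_ad_variations(titles, images)
print(len(variations))  # 2 titles x 3 images = 6 variations
```

With the old flow, those six variations would have meant six passes through the creation form. Here the user enters each title and image once.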

Research also revealed additional challenges users face when creating ads:

Ambiguous features (Category Selection, Teaser Rich, Read Metadata)

Unclear image focal point configuration and size adjustment

No distinct preview for the various image formats

To address these issues, I improved the ad creation flow as follows:

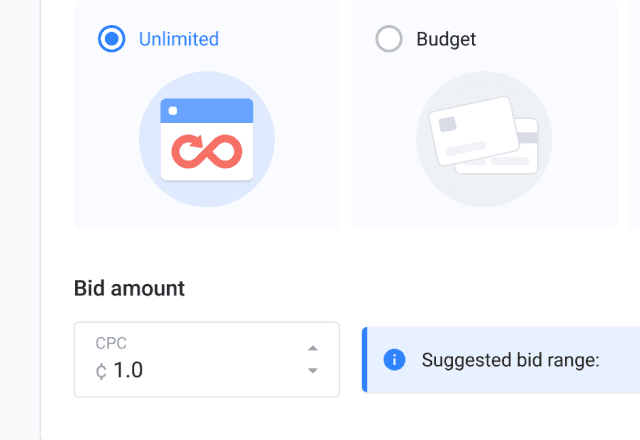

1. To streamline ad creation, we moved CPC selection to “Limits & Schedule” at the campaign level. Users can customize CPC for individual ads in the ad interface.

2. We moved the focal point settings to a dedicated screen and added a preview of all available formats for the chosen teaser on the ad edit page. Users can instantly see how the teaser appears on the content page and fine-tune the image center for each format.

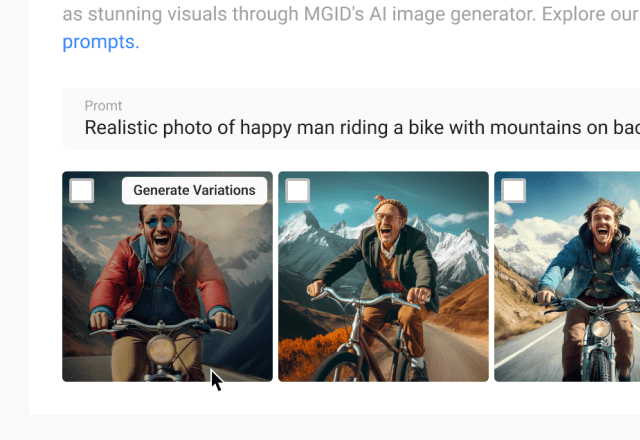

3. We added time-saving features such as Title Recommendation and AI-generated images, and removed redundant fields to streamline the ad creation process.

Campaign Creation

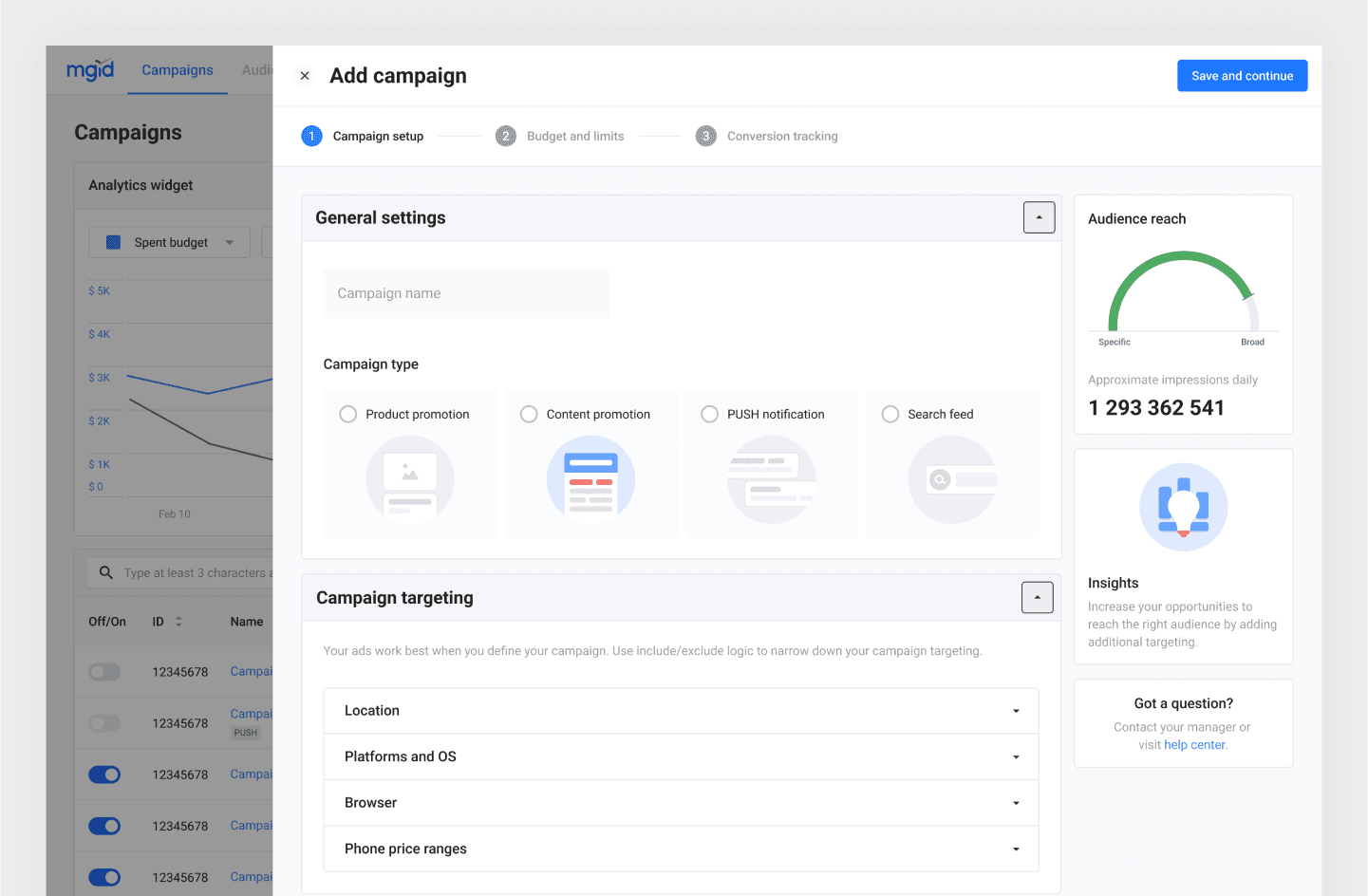

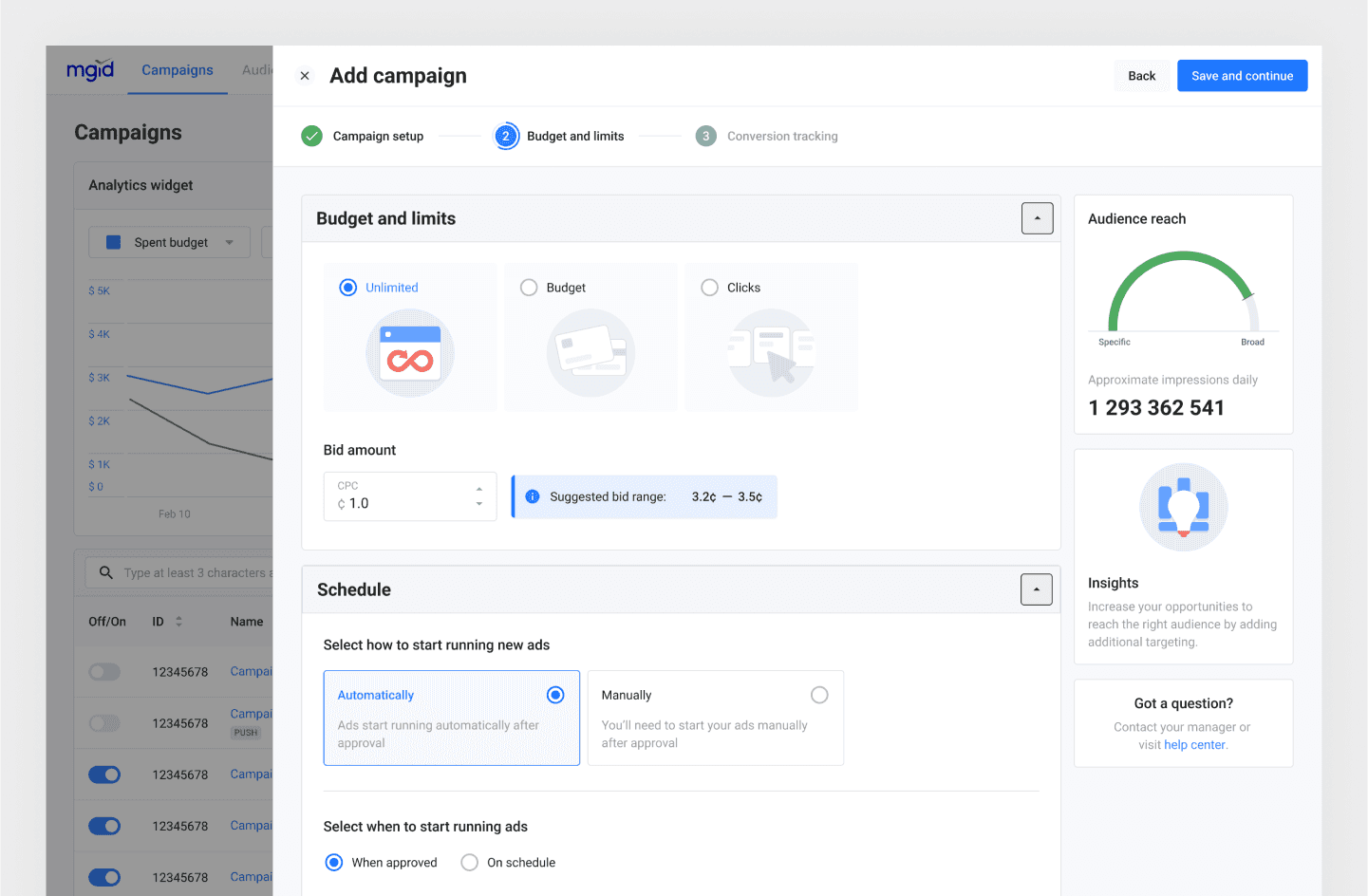

The focus here was streamlining the process to address major pain points: complex fields, confusing errors, no draft saving.

Initial improvements:

Split creation into three clear steps with progress tracking

Added auto-save and inline validation to prevent data loss

Moved errors to relevant fields with clearer messaging

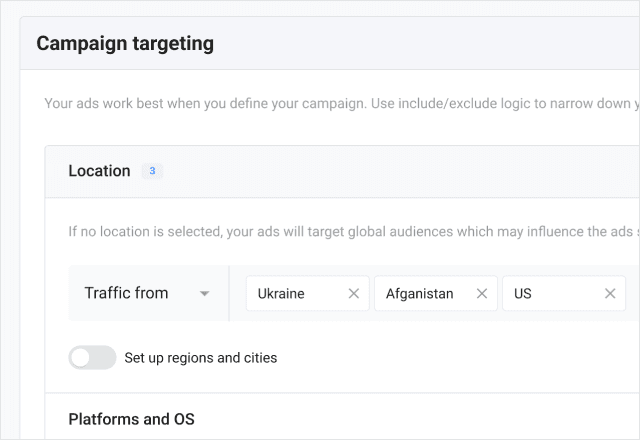

Based on targeting complexity feedback:

Simplified layout for easier scanning

Added detailed explanations and replaced icons with text labels

Further refinements:

Automated data collection to eliminate redundant fields

Moved traffic insights to separate page (rarely needed during creation)

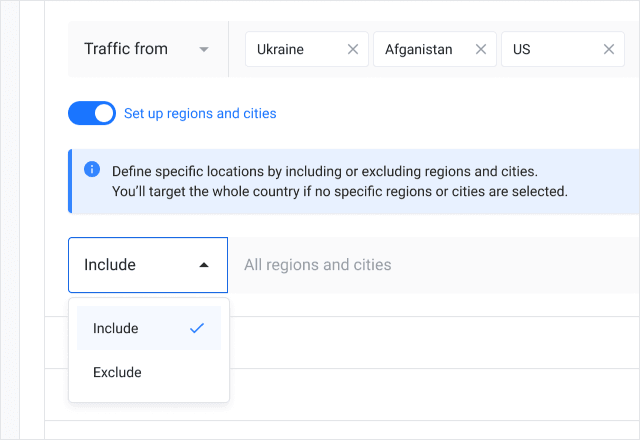

Based on user interview feedback, particularly about targeting complexity, I made the following changes:

Overhauled the layout for easy scanning and simplified the targeting settings to make the process more intuitive.

Improved user guidance by adding detailed explanations and replacing ambiguous icons with clear text labels for the include/exclude options.

I also fine-tuned the layout and removed unnecessary elements:

Eliminated redundant fields, such as the category field, by automating data collection.

Moved traffic insights to a separate page, since users rarely needed this information during campaign creation.

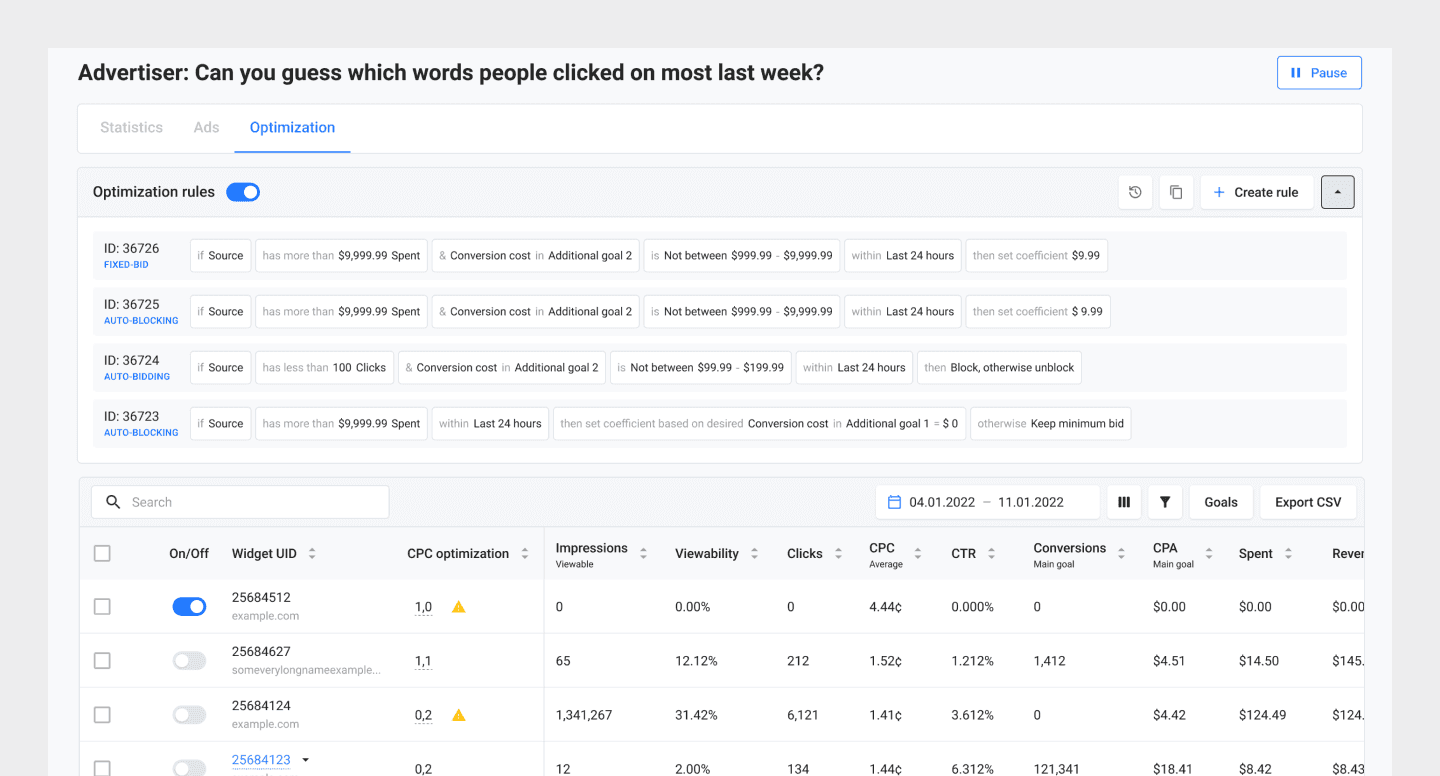

Campaign Optimization

This phase required extensive collaboration with the product manager and backend developers since interface improvements depended heavily on backend architecture.

Campaign optimization involves ongoing manual work—tracking stats, analyzing data, adjusting CPC, turning off underperforming sources. MGID introduced rule-based automation, but only 3% of advertisers used it.

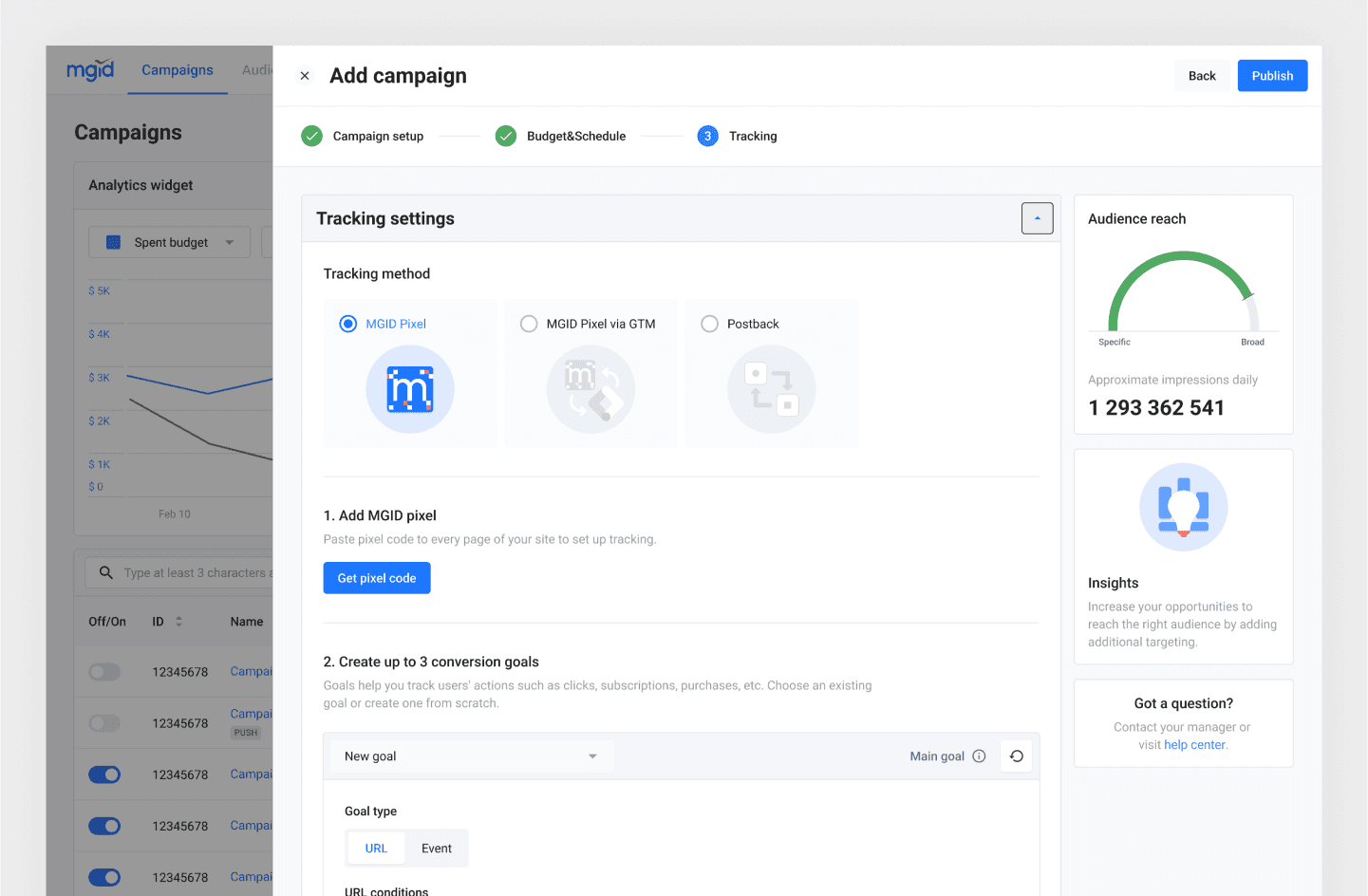
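To make the idea of rule-based automation concrete, here is a minimal sketch of one rule that flags underperforming sources. It does not describe MGID's actual rule engine: the `SourceStats` fields, the `sources_to_block` function, and the spend threshold are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class SourceStats:
    source_id: str
    spend: float       # money spent on this traffic source
    conversions: int   # conversions attributed to this source


def sources_to_block(stats, min_spend=20.0):
    """Return IDs of sources that spent at least min_spend with zero conversions."""
    return [
        s.source_id
        for s in stats
        if s.spend >= min_spend and s.conversions == 0
    ]


# Hypothetical statistics for three traffic sources.
stats = [
    SourceStats("src-1", spend=35.0, conversions=0),
    SourceStats("src-2", spend=50.0, conversions=4),
    SourceStats("src-3", spend=5.0, conversions=0),
]
print(sources_to_block(stats))
```

A rule like this replaces the manual loop of checking stats and switching sources off by hand, but it depends on conversion tracking being in place.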

Interviews revealed that the extensive tracking setup required during campaign creation deterred automation adoption. Since the tracking flow had already been reconstructed, the goal was to enhance UX without major backend changes:

Improved discoverability and layout of automatic rules

Reordered columns based on interview insights

Moved goal settings to filters

Results and Next Steps

Internal testing with 7 subject-matter experts in mid-April produced strong results. Feedback was mostly positive, and nearly all participants completed tasks independently without support.

The approach combined usability testing with interviews (1.5-2 hours per session) to evaluate task completion and gather post-task insights.

Improvements achieved:

Increased platform efficiency and campaign management speed

Reduced complexity, errors, and pain points

| Metrics | Initial design | Updated design |
|---|---|---|
| Time on task | 16.28 min | 14.13 min |
| Success rate | 65% | 96% |
| Difficulty (SEQ) | 3.4/7 | 5.6/7 |
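The size of each change can be worked out directly from the table. The short sketch below uses only the figures reported above. The helper `relative_change` is a hypothetical name introduced for illustration.

```python
def relative_change(before, after):
    """Relative change from before to after, as a percentage."""
    return (after - before) / before * 100


# Values taken from the results table.
time_change = relative_change(16.28, 14.13)  # time on task, in minutes
success_gain_pp = 96 - 65                    # success rate, in percentage points
seq_gain = round(5.6 - 3.4, 1)               # SEQ score, on a 7-point scale

print(f"Time on task: {time_change:.1f}%")
print(f"Success rate: +{success_gain_pp} percentage points")
print(f"SEQ: +{seq_gain} points")
```

Time on task fell by about 13%, the success rate rose by 31 percentage points, and the SEQ score rose by 2.2 points.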